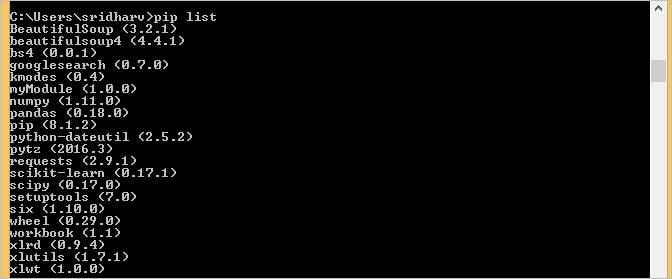

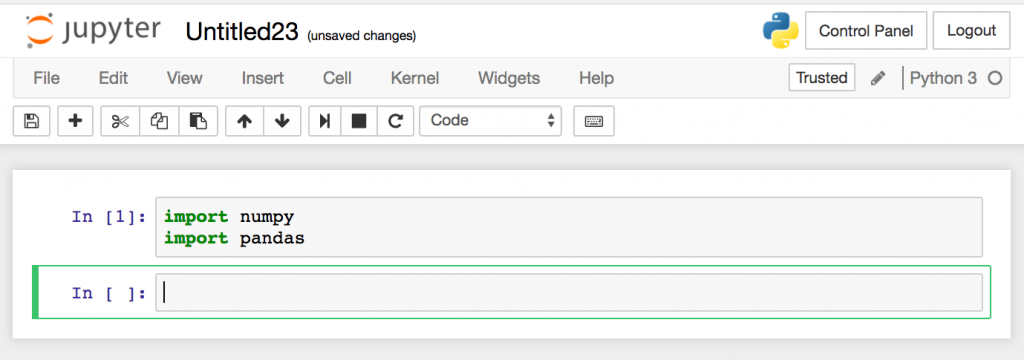

Lasso regression is a method we can use to fit a regression model when multicollinearity is present in the data.

In a nutshell, least squares regression tries to find coefficient estimates that minimize the sum of squared residuals (RSS):

$$\text{RSS} = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

where:

- y_i: The actual response value for the i-th observation (i = 1, …, n)
- ŷ_i: The predicted response value based on the multiple linear regression model

Conversely, lasso regression seeks to minimize the following:

$$\text{RSS} + \lambda \sum_{j=1}^{p} |\beta_j|$$

where j ranges from 1 to p predictor variables, β_j is the coefficient of the j-th predictor, and λ ≥ 0. This second term in the equation is known as a shrinkage penalty. In lasso regression, we select a value for λ that produces the lowest possible test MSE (mean squared error).

This tutorial provides a step-by-step example of how to perform lasso regression in Python.

Step 1: Import Necessary Packages

First, we'll import the necessary packages to perform lasso regression in Python:

    import pandas as pd
    from sklearn.linear_model import LassoCV
    from sklearn.model_selection import RepeatedKFold

Step 2: Load the Data

For this example, we'll use a dataset called mtcars, which contains information about 32 different cars. We'll use hp as the response variable and a subset of the remaining variables as the predictors.

The following code shows how to load and view this dataset:

    #define URL where data is located
    data = data_full[…]

    5  18.1  3.460  2.76  20.22  105

Step 3: Fit the Lasso Regression Model

Next, we'll use the LassoCV() function from sklearn to fit the lasso regression model, and the RepeatedKFold() function to perform k-fold cross-validation to find the optimal alpha value to use for the penalty term.

Note: The term "alpha" is used instead of "lambda" in Python's scikit-learn.

For this example we'll choose k = 10 folds and repeat the cross-validation process 3 times.

Also note that LassoCV() by default tests a grid of 100 alpha values that it derives from the data (the fixed defaults 0.1, 1, and 10 belong to RidgeCV()). However, we can define our own alpha range from 0 to 1 by increments of 0.01:

    #define predictor and response variables
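As a minimal sketch of Step 3, the following fits LassoCV() with RepeatedKFold() using k = 10 folds repeated 3 times and a custom alpha grid. It makes several assumptions: it runs on synthetic data rather than the mtcars file, the predictor names mpg, wt, drat, and qsec are illustrative choices, and the grid starts at 0.01 instead of 0 because alpha = 0 is ordinary least squares, which scikit-learn's lasso solver warns against.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LassoCV
from sklearn.model_selection import RepeatedKFold

# Synthetic stand-in for the car data (NOT the real mtcars values).
# Column names are assumptions chosen to resemble the tutorial's dataset.
rng = np.random.default_rng(0)
n = 60
data = pd.DataFrame({
    "mpg": rng.normal(20, 6, n),
    "wt": rng.normal(3.2, 1.0, n),
    "drat": rng.normal(3.6, 0.5, n),
    "qsec": rng.normal(17.8, 1.8, n),
})
# Hypothetical response: hp depends on mpg, wt, and qsec plus noise.
data["hp"] = (
    480 - 4 * data["mpg"] + 25 * data["wt"] - 18 * data["qsec"]
    + rng.normal(0, 15, n)
)

# define predictor and response variables
X = data[["mpg", "wt", "drat", "qsec"]]
y = data["hp"]

# define cross-validation method: k = 10 folds, repeated 3 times
cv = RepeatedKFold(n_splits=10, n_repeats=3, random_state=1)

# candidate alpha values: 0.01, 0.02, ..., 1.00
alphas = np.linspace(0.01, 1.0, 100)

# fit the lasso model, choosing alpha by cross-validated MSE
model = LassoCV(alphas=alphas, cv=cv, max_iter=10000)
model.fit(X, y)

print("chosen alpha:", model.alpha_)
print("coefficients:", dict(zip(X.columns, model.coef_)))
```

After fitting, `model.alpha_` holds the alpha with the lowest cross-validated MSE and `model.coef_` holds the corresponding coefficient estimates, some of which may be shrunk to exactly zero.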
